“One way to invest in AI is to purchase stock in companies that are developing and utilising AI technologies,” Alastair Moore, partner at venture builder The Building Blocks, tells Opto via email. Or so we think. Moore soon follows up with a confession: while he’s happy to take credit for this quote, it was actually automatically generated by ChatGPT.

ChatGPT was launched on 30 November 2022 by the AI research lab OpenAI, and is a freely available AI chatbot based on GPT-3.5, an AI language model. The app’s popularity has opened people’s eyes to the potential for AI to transform how marketing content and software code are created. Another OpenAI tool, DALL·E 2, can create original artwork from a text input, mimicking the style of individual artists while doing so.

Microsoft [MSFT] has since confirmed that it is upping its $1bn stake in OpenAI, with rumours suggesting it is investing as much as $11bn in total. There are, however, concerns that such technologies carry with them legal and reputational risks.

Big tech’s AI arms race

Microsoft’s proposal to integrate ChatGPT’s tech into its Bing search engine was seen by many as a direct challenge to Alphabet’s [GOOGL] own search function. Alphabet, in response, scrambled to release its own rival chatbot, Bard, on 7 February.

Chinese tech giant Baidu [BIDU] also unveiled plans for its own chatbot — known as Wenxin Yiyan in China and dubbed ERNIE elsewhere — on the same day, causing its share price to jump 12%.

Online publisher Buzzfeed [BZFD] said it will use OpenAI’s software to write quizzes and other content, which led the company’s share price to more than double on 26 January, the day of the announcement.

“We have entered a golden era for AI with major implications for investors,” Tejas Desai, research analyst at Global X ETFs, tells Opto. Desai predicts that big tech companies with the data assets and resources to train AIs (like Alphabet and Microsoft), as well as hardware companies such as Nvidia [NVDA] and Qualcomm [QCOM], which provide components to power the technology, will be among the biggest winners.

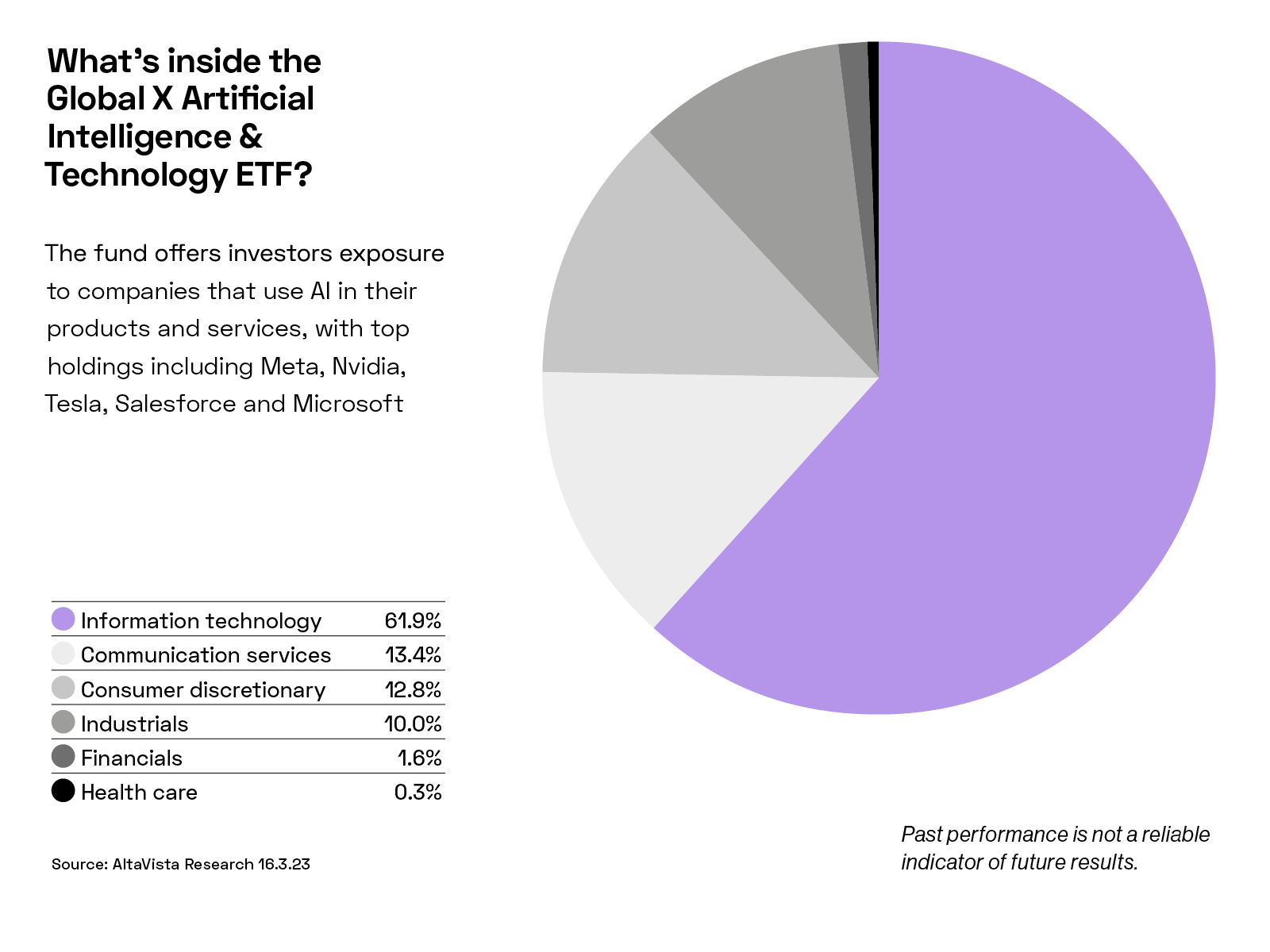

Desai recommends diversification via thematic ETFs to investors looking to gain exposure to the space, “given how early we are in what is going to be a long cycle of disruption and adoption” in AI. The top three holdings of the Global X Artificial Intelligence and Technology ETF [AIQ] were Tesla [TSLA], Meta [META] and Nvidia as of 1 March. It also offers exposure to Microsoft, Qualcomm, Alphabet and Baidu.

AI tools are at present imperfect. An advert for Alphabet’s Bard shows the AI tool incorrectly asserting that the James Webb Space Telescope took the first photograph of a planet outside the solar system (in fact, this feat was achieved by the European Southern Observatory’s Very Large Telescope in 2004). Alphabet’s share price fell 7.7% the day after Bard was announced.

Bing’s integration with ChatGPT has also hit road bumps after the chatbot made a series of unsettling statements to journalists such as “I want to destroy whatever I want” and “I want to be alive” in February.

On top of concerns about misinformation, intellectual property (IP) may also be an issue. Stability AI, the creator of AI art tool Stable Diffusion, is being sued by Getty Images [GETY], which claims the company illegally scraped millions of its stock photos during the training of its AI.

Should Stability AI be found to have breached IP laws, Moore believes “a workaround will be found.”

This case, and others like it, raises questions around the ownership of AI creations. If lawyers conclude that AI firms owe companies or creatives a licence fee, this would have a major bearing on the economic rationale for these tools. Then there is the question of who pays for an IP violation: the developer who built the AI tool, or the individual or company who published the material the AI churned out at their request?

ChatGPT contains various built-in restrictions against certain behaviours. However, some users have developed a variant known as DAN (Do Anything Now), which, by encouraging the AI to pretend these safeguards don’t apply, circumvents them. DAN is able to create violent content, encourage illegal activity and invent misinformation.
